Big O notation

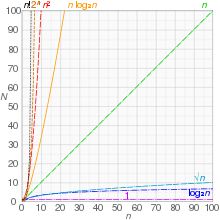

The letter O was chosen by Bachmann to stand for Ordnung, meaning the order of approximation.[3] In analytic number theory, big O notation is often used to express a bound on the difference between an arithmetical function and a better understood approximation; a famous example of such a difference is the remainder term in the prime number theorem.

In some treatments, $f$ and $g$ are both required to be functions from some unbounded subset of the positive integers to the nonnegative real numbers.

When the argument grows large, the contribution of the terms that grow "most quickly" will eventually make the other ones irrelevant. As a result, simplification rules can be applied: in a sum of several terms, the one with the largest growth rate is kept and the others are omitted, and constant factors are dropped. For example, let $f(x) = 6x^4 - 2x^3 + 5$, and suppose we wish to simplify this function, using O notation, to describe its growth rate as $x$ approaches infinity. The fastest-growing term is $6x^4$; dropping its constant factor gives $f(x) = O(x^4)$.

There are two formally close, but noticeably different, usages of this notation.[citation needed] This distinction is only in application and not in principle, however: the formal definition for the "big O" is the same for both cases, only with different limits for the function argument.

For example, the time (or the number of steps) it takes to complete a problem of size $n$ might be found to be $T(n) = 4n^2 - 2n + 2$. Additionally, the number of steps depends on the details of the machine model on which the algorithm runs, but different types of machines typically vary by only a constant factor in the number of steps needed to execute an algorithm. So the big O notation captures what remains: we write either $T(n) = O(n^2)$ or $T(n) \in O(n^2)$ and say that the algorithm has order of $n^2$ time complexity.

A function that grows more slowly than any exponential function of the form $c^n$ is called subexponential.

This is not the only generalization of big O to multivariate functions, and in practice, there is some inconsistency in the choice of definition.

Some consider the statement $f(x) = O(g(x))$ to be an abuse of notation, since the use of the equals sign could be misleading as it suggests a symmetry that this statement does not have.[9] Knuth describes such statements as "one-way equalities", since if the sides could be reversed, "we could deduce ridiculous things like $n = n^2$ from the identities $n = O(n^2)$ and $n^2 = O(n^2)$".[10] In another letter, Knuth made a related observation. For these reasons, it would be more precise to use set notation and write $f(x) \in O(g(x))$ (read as: "$f(x)$ is an element of $O(g(x))$", or "$f(x)$ is in the set $O(g(x))$"), thinking of $O(g(x))$ as the class of all functions $h(x)$ such that $|h(x)| \le C\,|g(x)|$ for some positive real number $C$.[10] However, the use of the equals sign is customary.

Suppose an algorithm is being developed to operate on a set of $n$ elements. Its developers are interested in finding a function $T(n)$ that will express how long the algorithm will take to run (in some arbitrary measurement of time) in terms of the number of elements in the input set. The algorithm works by first calling a subroutine to sort the elements in the set and then performing its own operations. The sort has a known time complexity of $O(n^2)$, and after the subroutine runs the algorithm must take an additional $55n^3 + 2n + 10$ steps before it terminates. Thus the overall time complexity of the algorithm can be expressed as $T(n) = 55n^3 + O(n^2)$, where the terms $2n + 10$ are subsumed within the faster-growing $O(n^2)$. Again, this usage disregards some of the formal meaning of the "=" symbol, but it does allow one to use the big O notation as a kind of convenient placeholder. In more complicated usage, $O(\cdot)$ can appear in different places in an equation, even several times on each side.[14][15]

Classes of functions commonly encountered when analyzing the running time of an algorithm include constant, logarithmic, polylogarithmic, linear, linearithmic, quadratic, polynomial, subexponential, exponential, and factorial.[21]

Authors that followed Landau, however, use a different notation for the same definitions.[citation needed]

For example, if $T(n)$ represents the running time of a newly developed algorithm for input size $n$, the inventors and users of the algorithm might be more inclined to put an upper asymptotic bound on how long it will take to run without making an explicit statement about the lower asymptotic bound.[6]

Inside an equation or inequality, the use of asymptotic notation stands for an anonymous function in the set $O(g)$, which eliminates lower-order terms and helps to reduce inessential clutter in equations.[33]

Another notation sometimes used in computer science is Õ (read soft-O), which hides polylogarithmic factors.[35] Essentially, it is big O notation that ignores logarithmic factors, because the growth-rate effects of some other super-logarithmic function indicate a growth-rate explosion for large input parameters that matters more for predicting bad run-time performance than the finer effects contributed by the logarithmic factors. This notation is often used to obviate the "nitpicking" within growth rates that are stated as too tightly bounded for the matters at hand (since $\log^k n$ is always $o(n^\varepsilon)$ for any constant $k$ and any $\varepsilon > 0$).

A generalization to functions $g$ taking values in any topological group is also possible.[citation needed] The "limiting process" $x \to x_0$ can also be generalized by introducing an arbitrary filter base, i.e. to directed nets $f$ and $g$. The o notation can be used to define derivatives and differentiability in quite general spaces, and also (asymptotical) equivalence of functions, which is an equivalence relation and a more restrictive notion than the relationship "$f$ is $\Theta(g)$".

In the 1970s the big O was popularized in computer science by Donald Knuth, who proposed a different notation. On the other hand, in the 1930s,[41] the Russian number theorist Ivan Matveyevich Vinogradov introduced his own notation. The big-O originally stands for "order of" ("Ordnung", Bachmann 1894), and is thus a Latin letter.
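The two polynomial examples from the text, f(x) = 6x^4 − 2x^3 + 5 and T(n) = 4n^2 − 2n + 2, can be checked numerically against the definition |h(x)| ≤ C |g(x)|. The following Python sketch is only an illustration: the helper name `bounded_by`, the constants 13 and 4, the starting point 1, and the tested range are all assumptions chosen for this example and do not come from the article. A check over a finite range is evidence, not a proof; the constant 13 works for f because 6x^4 + 2x^3 + 5 ≤ 13x^4 whenever x ≥ 1.

```python
def f(x):
    # Example polynomial from the text: 6x^4 - 2x^3 + 5
    return 6 * x**4 - 2 * x**3 + 5


def T(n):
    # Example step count from the text: 4n^2 - 2n + 2
    return 4 * n**2 - 2 * n + 2


def bounded_by(func, bound, constant, start, stop):
    """Hypothetical helper: True if |func(x)| <= constant * |bound(x)|
    for every integer x with start <= x < stop."""
    return all(abs(func(x)) <= constant * abs(bound(x))
               for x in range(start, stop))


# f stays below 13 * x^4 from x = 1 onward, consistent with f(x) = O(x^4).
f_is_O_x4 = bounded_by(f, lambda x: x**4, 13, 1, 10_000)

# The same constant fails against x^3: the x^4 term eventually dominates.
f_is_O_x3 = bounded_by(f, lambda x: x**3, 13, 1, 10_000)

# T stays below 4 * n^2 from n = 1 onward, consistent with T(n) = O(n^2).
T_is_O_n2 = bounded_by(T, lambda n: n**2, 4, 1, 10_000)

# The ratio T(n) / n^2 approaches the leading coefficient 4 as n grows,
# which is why the lower-order terms -2n + 2 can be ignored.
ratios = [T(n) / n**2 for n in (10, 1_000, 100_000)]

print(f_is_O_x4, f_is_O_x3, T_is_O_n2)
print(ratios)
```

Failing the x^3 check with one particular constant does not by itself show that f is not O(x^3); it only shows that 13 is too small on this range. The real argument is that f(x) / x^3 grows without bound, so no constant can work.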
